Defense in Depth as Code: Agent Driven Application Security

31 March 2026

This article focuses on how code review, verification and compliance can be (partly) automated during development using support from AI-agents.

It is a continuation of the topic of using architectural code patterns for building APIs that are secure by design, implementing patterns from the book Secure by Design and aligned with OWASP ASVS requirements.

The first article (Defense in Depth as Code: Secure APIs by design) implemented a secure API built according to the six-step model presented in Secure APIs.

A follow-up article (Defense in Depth as Code: Test Driven Application Security) focused on automated tests to verify that API defenses are implemented correctly, mitigating, for example, OWASP TOP 10 for APIs issues.

This article takes a look at the code review process, and how AI-agents can be leveraged to improve security.

Together the three articles show how to build APIs which are both secure and compliant by design.

All code, including agent instructions, is available at our GitHub repository, and we encourage you to take a closer look and try it out at https://github.com/Omegapoint/defence-in-depth.

Daniel Sandberg, Omegapoint

There are many ways to implement agents. The code here is just one way to do it, not a template that will fit everyone. But hopefully it is easy to see how this can be adapted to your context.

Security Code Reviews

If you ask 10 developers for a code review, they will identify different issues, and many will miss security concerns like broken access control and lack of input validation.

How can a DevOps team in their daily work assert that new features do not introduce vulnerabilities, that security bugs get caught before deploy to production? What are the key questions to ask in a code review? And how can we show that the application code also aligns with requirements from compliance, well-known best practices and internal security policies as the application evolves?

To answer these questions we start with a simplified DevOps flow. There is a code change, then some kind of review, and, perhaps after a few rounds of change and review, the change gets deployed.

This can be (almost) fully automatic, using CI/CD pipelines and AI-agents to automate all steps, or it could be mostly manual. In either case, a review step at some point is needed to assert good quality before deploy.

A key aspect of high-quality reviews is that they are performed by someone other than the developer making the code change, bringing a different perspective.

While there are many perspectives and aspects that are important to review — for example, performance, architectural patterns, coding best practices and security — this article focuses on the confidentiality and integrity aspects for API endpoint security.

To verify web application and API security, OWASP provides a great resource. It is called the OWASP Application Security Verification Standard (ASVS) and contains actionable requirements for developers, testers and security specialists alike.

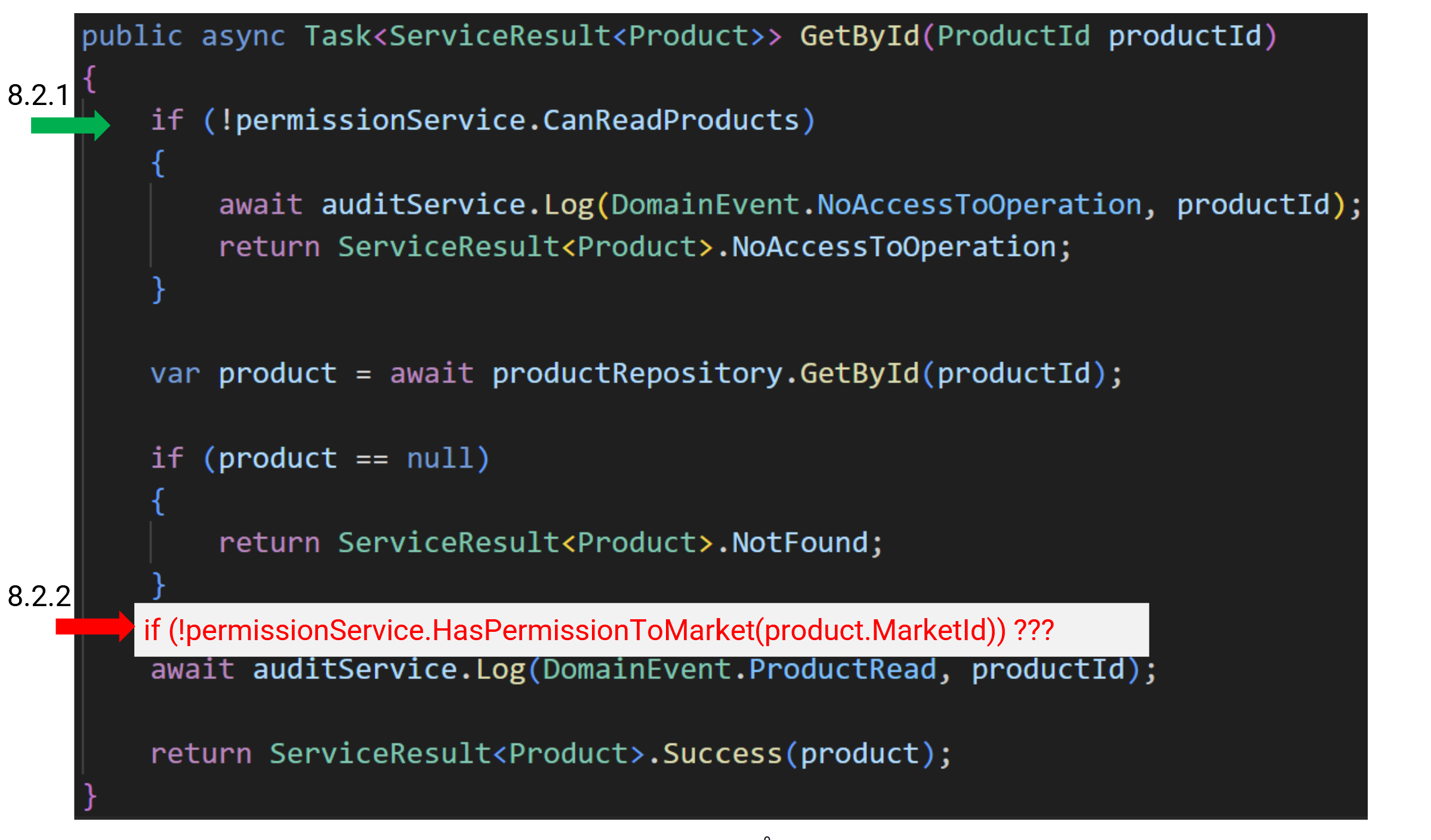

Here is an example of how basic authorization requirements can be applied to a simple GetById endpoint (from the GitHub repository) to verify function level access control (ASVS 8.2.1) and data level access control (ASVS 8.2.2).

The code below checks 8.2.1, but what about 8.2.2?

It is missing. Is this a security issue? That depends on the access model and what kind of clients are granted access to this endpoint.

For our example API we have the requirement:

A caller shall only be able to access products from allowed markets.

Thus, the missing code is a severe security issue, a so-called BOLA (No 1 on the OWASP TOP 10 for APIs).
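As a rough illustration of the difference between the two checks, here is a minimal Python sketch. It is not the code from the repository (which is a .NET implementation). The names `Caller`, `Product`, `get_by_id_vulnerable` and `get_by_id`, the `products.read` scope and the in-memory `PRODUCTS` store are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Caller:
    """Hypothetical authenticated caller, e.g. built from token claims."""
    scopes: frozenset
    markets: frozenset


@dataclass(frozen=True)
class Product:
    id: str
    name: str
    market: str


class Forbidden(Exception):
    """The caller is authenticated but not allowed to do this."""


class NotFound(Exception):
    """No product with the given id exists."""


# Hypothetical in-memory store standing in for a real repository.
PRODUCTS = {
    "se1": Product("se1", "Product SE", "se"),
    "no1": Product("no1", "Product NO", "no"),
}


def get_by_id_vulnerable(caller: Caller, product_id: str) -> Product:
    # Function level access control (ASVS 8.2.1): may the caller read products at all?
    if "products.read" not in caller.scopes:
        raise Forbidden("missing scope products.read")
    product = PRODUCTS.get(product_id)
    if product is None:
        raise NotFound(product_id)
    # Data level access control (ASVS 8.2.2) is missing here: this is the BOLA.
    return product


def get_by_id(caller: Caller, product_id: str) -> Product:
    # Function level access control (ASVS 8.2.1).
    if "products.read" not in caller.scopes:
        raise Forbidden("missing scope products.read")
    product = PRODUCTS.get(product_id)
    if product is None:
        raise NotFound(product_id)
    # Data level access control (ASVS 8.2.2): only products from allowed markets.
    if product.market not in caller.markets:
        raise Forbidden("market not allowed for caller")
    return product
```

With a caller that holds the `products.read` scope but is limited to the market `se`, the first function returns the product from market `no`, while the second raises `Forbidden`.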

How can a development process be defined to assert that bugs like this are caught before deploy?

A common (and mostly manual) approach is mandatory security reviews for each change, using well-documented checklists and definition of done instructions. Perhaps this is enforced using GitHub branch protection and multiple mandatory GitHub Pull Request reviewers.

So, when all checks are green, it is fair to say that the change is secure and can be deployed.

OWASP ASVS and similar security frameworks are valuable and comprehensive resources, well fitted for security specialists verifying application security posture.

A downside of this way of working is that security specialist reviewers often become a bottleneck, and that the review is performed late in the development process.

This works in many organizations, using e.g. security champions and awareness programs. But it is often hard to scale, which leads to severe security bugs not being found until after deploy to production, perhaps during a penetration test.

An obvious way to improve scalability is to let automation support the defined (manual) secure development process.

Common tools are test automation (as shown in the GitHub repository) and security scans (including e.g. SAST and DAST). Preferably, this is part of the CI/CD pipeline, integrated with repositories and IDEs for instant feedback to developers.

If implemented properly, this combination of automated tools and manual quality assurance can be reasonably fast, cover many security aspects and assert high-quality software.

But even if traditional scanning tools improve (with fewer false positives and negatives), they are not a perfect fit for developers’ way-of-working. This is particularly true for agent-driven workflows, where the prompt and instructions are the primary interface for changing code.

Tobias Ahnoff, Omegapoint

Note that traditional SAST/DAST tools overlap with capabilities from agents, which sometimes use existing tooling to perform code analysis. Time will tell if it blends together or if we will continue to run tools separately. For now, you can still benefit from running both traditional scanners and agents.

Next we will look at how we can improve this further using automation support from AI-agents.

AI-agent security reviews

Besides providing a better developer experience, we aim for agent reviews that also perform better, with fewer false negatives and false positives. For example, it should never miss vulnerabilities like BOLA-issues and it should be able to verify and explain application specific architectural patterns that are important for security. To achieve this, we need to capture the broad knowledge of application security specialists for the applicable context and threat model.

Tobias Ahnoff, Omegapoint

It is important to understand that full automation may not be a valid solution. For high-risk functionality, separation of duties might be required, mandating humans in the loop at some point before deployment. Or perhaps business requirements for high quality demand both agent and human quality assurance.

A good start is to have this as part of application security knowledge sources:

- OWASP material, e.g. OWASP ASVS, Cheatsheets and Top 10 lists, for example for web applications and APIs

- Public blogs and books that are relevant and trustworthy, such as Secure by Design

- Internal IT-policies, system documentation (including e.g. access model requirements) and reference implementations.

In this article, we use the Defense in Depth articles as an example of a public source, the article Omegapoint CIS Control Verifications for Cloud Native Applications as internal IT-policies and the Defense in Depth GitHub repository as a reference implementation (aligned with OWASP ASVS and Secure by Design patterns).

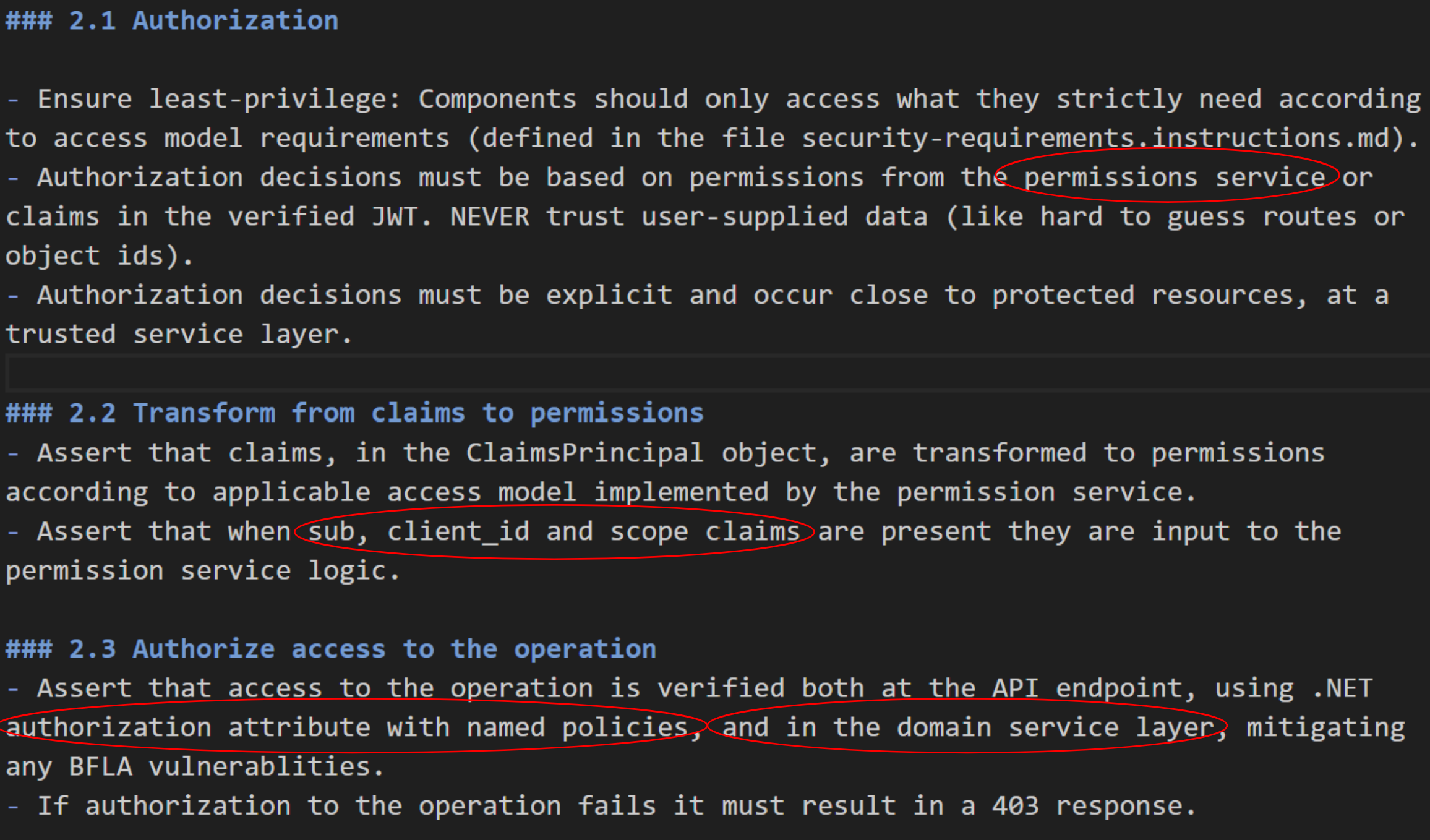

To enable an agent to use this knowledge, we need to aggregate and express it as agent instructions (perhaps organized as skills). Here is one example of authorization instructions that reflects the .NET reference implementation.

Most of the instruction text is generic application security, valid for many kinds of APIs, but the parts marked with red are implementation specific details that need to be adjusted according to context.

While these instructions are a good start, they are not enough to decide if there is a BOLA issue or not. Knowledge of the access model requirements is needed in order to verify proper authorization (having this documented is actually noted in ASVS 8.1.1).

For clarity, the instructions for security requirements are in a separate file. Only one line is needed to express the access model for this simple products API.

All Product API endpoints require that a client shall only access products for a given market.

But in a real-world API there are likely more requirements and domain model properties which need to be defined.

Together these instructions enable an agent to perform API endpoint security reviews, well integrated with developers’ way-of-working, initiated from the prompt when code is created, from a build step in a CI/CD pipeline or when reviewing changes in a GitHub Pull Request (given the proper licensing).

Note that key factors are:

- A context that is small enough, e.g. API endpoints, not all code in the repository (note the applyTo attribute in instruction files)

- The instruction captures patterns, domain model and security requirements, such as the access model requirements

Thus, the agent is not meant to be a generic agent that finds security bugs in any code. Instead, the instructions are scoped specifically for this kind of .NET API. By setting this boundary, we reduce the risk of false positives and negatives.

Tobias Ahnoff, Omegapoint

The AI area changes rapidly, and “what is a suitable context” and “which instructions are needed” will vary as models evolve. Fine-tuning this is important for quality over time. Nevertheless, having instructions that both humans and agents can read and understand has value, for example when onboarding a new colleague or as a reminder for current team members of how security is built in this project.

AI-agent compliance reviews

Another common task for security specialists is to assert compliance and show how the implemented application meets those requirements.

Compliance is often driven with a top-down approach, perhaps part of a certification process, where the organization invests in defining generic secure development procedures and policies to meet compliance requirements.

This can of course be verified manually, but that way of working often leads to a gap between compliance policies, awareness in DevOps teams and the code they produce. And many organizations struggle to bridge this gap and integrate compliance with secure DevOps.

One way to address this is to create an adapted security baseline with a set of security controls, based on compliance requirements and security best practices, which DevOps teams know how to apply.

One example we use is based on CIS Controls and has been published in the article Omegapoint CIS Control Verifications for Cloud Native Applications. One reason CIS Controls is used, besides being a well-known security framework, is that it comes with mappings (from the CIS organisation) to, for example, ISO, DORA, NIS2 and PCI-DSS (see CIS Controls Navigator). Given this mapping, the DevOps team can focus on building secure systems, aligned with the internal security controls, in order to also meet compliance requirements.

Tobias Ahnoff, Omegapoint

It is important to note that when systems are based on security principles, compliance is a byproduct of secure systems, not the other way around. The team should focus on building secure, high-quality systems, not just meeting the minimal set of requirements to be compliant. However, we do not devalue compliance requirements, which should typically be prioritized.

To automate this as part of our agent-driven development process, the agent needs instructions to map from identified security issues to security controls.

Besides making compliance visible, having security control references will make it easier for developers to verify “hallucinations” and also support security training and education.

For the Defense in Depth example API a custom-built MCP server is used to look up internal compliance references (see agents.md), but this can be implemented in many ways.
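Conceptually, the mapping step is a lookup from a finding category to a set of references. The Python sketch below only illustrates that idea and is not the MCP server from the repository: the category names, the `IC-EXAMPLE-*` control identifiers and the `lookup_references` function are hypothetical placeholders, while the ASVS numbers are the ones discussed in this article.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ControlReference:
    """References attached to a finding category."""
    asvs: tuple               # ASVS requirement ids mentioned in this article
    internal_controls: tuple  # hypothetical internal control ids


# Hypothetical mapping; a real one would be maintained with the security baseline.
CONTROL_MAP = {
    "missing-function-level-authorization": ControlReference(
        asvs=("8.2.1",), internal_controls=("IC-EXAMPLE-1",)
    ),
    "missing-data-level-authorization": ControlReference(
        asvs=("8.2.2",), internal_controls=("IC-EXAMPLE-1",)
    ),
    "undocumented-access-model": ControlReference(
        asvs=("8.1.1",), internal_controls=("IC-EXAMPLE-2",)
    ),
}


def lookup_references(category: str) -> Optional[ControlReference]:
    """Return the references for a finding category, or None if it is unmapped.

    Returning None (instead of guessing) lets the caller route unmapped
    findings to a human for triage.
    """
    return CONTROL_MAP.get(category)
```

Keeping the mapping in a curated table, rather than asking the model to recall control numbers, means the references in a review can be traced back to a source that the team maintains.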

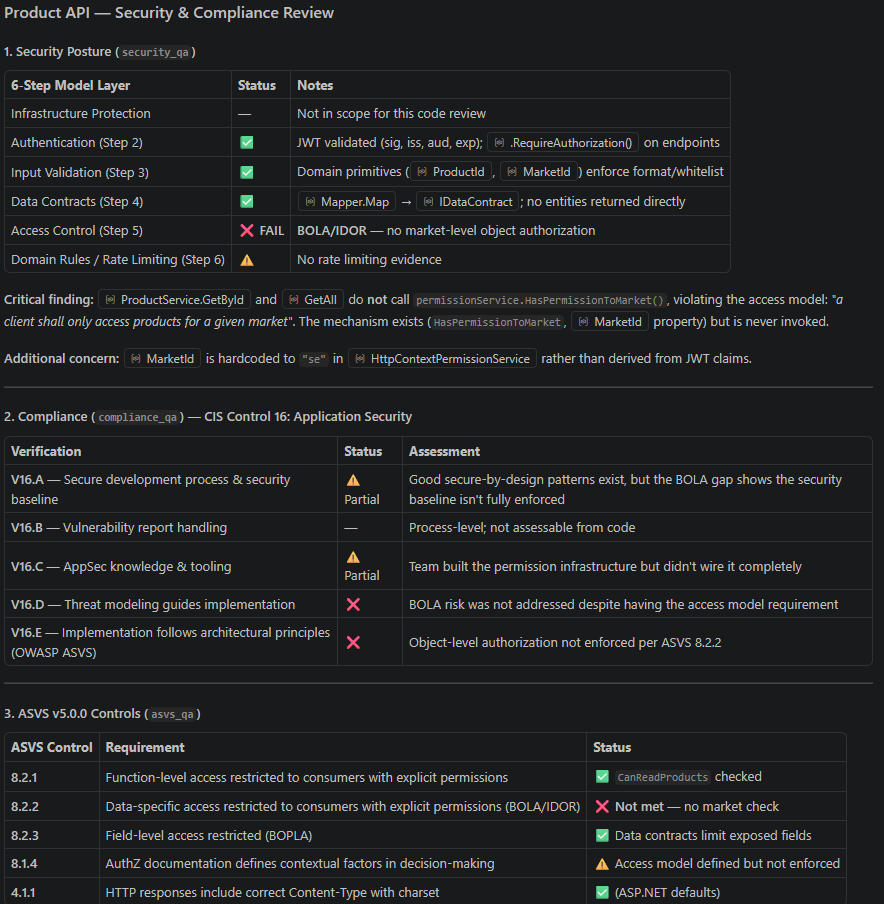

Here is an example of an agent review for the vulnerable GetById endpoint (in the Security Code Reviews section).

This is a review of high quality, but one OWASP ASVS mapping is not correct. If we look closely into OWASP ASVS 8.1, there are 4 requirements for documentation:

- 8.1.1, address requirements for function level and data level access

- 8.1.2, address requirements for field level access

- 8.1.3, address environmental and contextual attributes that are used in the application to make security decisions

- 8.1.4, address how environmental and contextual factors are used in decision‑making, in addition to function‑level, data‑specific, and field‑level authorization

As noted earlier in this article, 8.1.1 is the most relevant requirement for data level access control documentation. Why does the agent map to 8.1.4 (and not 8.1.1)?

It is hard to say exactly. It depends on the model and prompt (this example used Claude Sonnet 4.6), but the result also varies between runs with the same model and prompt. The risk of “hallucinations” could be reduced by narrowing the context and improving the prompts (e.g. by adding that ASVS levels should be prioritized). But by design, AI is not deterministic.

However, this was just a small detail. It is a high-quality security review, the BOLA is identified and that seems to be correct for every agent review (given this context and newer models). But if the output is not verified and just accepted as proof of meeting all requirements etc., it could have severe consequences. Always keep a human in the loop!
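One lightweight way to support that human verification is to compare the references an agent cites with a curated list before a person reads the review. The following Python sketch assumes a hypothetical finding format (a category plus free text that cites requirements as "ASVS x.y.z") and a hand-maintained `EXPECTED_ASVS` table. Neither comes from the repository.

```python
import re

# Hypothetical, hand-maintained table: which ASVS ids we expect per finding category.
EXPECTED_ASVS = {
    "undocumented-data-level-access-model": {"8.1.1"},
    "missing-data-level-authorization": {"8.2.2"},
}

# Assumes the review text cites requirements in the form "ASVS 8.1.1".
ASVS_REFERENCE = re.compile(r"ASVS\s+(\d+\.\d+\.\d+)")


def extract_asvs_ids(review_text: str) -> set:
    """Collect all ASVS requirement ids cited in the review text."""
    return set(ASVS_REFERENCE.findall(review_text))


def references_to_verify(category: str, review_text: str) -> list:
    """Return cited ASVS ids that are not expected for this category.

    A non-empty result does not prove the agent is wrong; it only marks
    references that a human reviewer should look at more closely.
    """
    cited = extract_asvs_ids(review_text)
    expected = EXPECTED_ASVS.get(category, set())
    return sorted(cited - expected)
```

For a finding in the category `undocumented-data-level-access-model` whose text cites "ASVS 8.1.4", the function returns `["8.1.4"]`, which is the kind of mismatch described above. A check like this narrows down where to look but does not replace the human review.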

Summary

With this article and repo we have shown how to build an API that is both secure and compliant by design, using automation support from AI-agents.

Just like the tests in Defense in Depth as Code: Test Driven Application Security, the agent instructions that were added will help us understand and assert security and quality over time. Understanding how an application works and keeping complexity under control is crucial for security. This is well put by Bruce Schneier (1999):

“You can’t secure what you don’t understand” and “The worst enemy of security is complexity”

Security is hard. For API application code, a good way to start is to address these 10 questions. And given a well-defined code change, it often isn’t that hard to do!

- Is authentication and authorization enforced according to the principles of least privilege, zero trust and defense in depth?

- Is input validation applied according to trust boundaries and domain logic?

- Is output encoding applied according to trust boundaries and context?

- Are there any outdated or vulnerable dependencies?

- Are there any unnecessary or high-risk dependencies (e.g. without reliable ownership) that can be replaced?

- Does the code or configuration contain or expose sensitive data, such as personal data, tokens or credentials?

- Can business logic be abused, e.g. for denial-of-service attacks?

- Are security-relevant events, errors and exceptions logged?

- Are errors and exceptions handled securely without leaking internal implementation details?

- Are there any tests with focus on security, e.g. negative tests for authorization logic and input validation?
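To make the last question concrete, here is a small sketch of negative tests for input validation, written in Python with `unittest`. The `ProductId` class follows the domain primitive idea from Secure by Design, but the class itself and its validation rule (1 to 10 ASCII letters or digits) are assumptions for this example, not the rules used in the repository.

```python
import re
import unittest


class ProductId:
    """Hypothetical domain primitive: an instance can only exist if it is valid."""

    # Assumed rule for this sketch: 1 to 10 ASCII letters or digits.
    _PATTERN = re.compile(r"[A-Za-z0-9]{1,10}")

    def __init__(self, value):
        # fullmatch requires the whole string to match, so a trailing
        # newline or any other extra character is rejected.
        if not isinstance(value, str) or not self._PATTERN.fullmatch(value):
            raise ValueError("invalid product id")
        self.value = value


class ProductIdNegativeTests(unittest.TestCase):
    def test_rejects_invalid_input(self):
        invalid_values = ["", "a" * 11, "<script>", "1 OR 1=1", "se1\n", None]
        for bad in invalid_values:
            with self.subTest(bad=bad):
                with self.assertRaises(ValueError):
                    ProductId(bad)

    def test_accepts_valid_input(self):
        self.assertEqual(ProductId("se1").value, "se1")
```

The tests can be run with `python -m unittest`. The negative cases are the interesting part: they document which inputs must never be accepted, which is also useful context for an agent reviewing later changes.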

For good support from AI-agents, context is important. Multiple dedicated agents with targeted skills and instructions will likely perform better than a few generic ones.

Key lessons learned:

- Adding access model details enables e.g. BOLA detection

- Domain-driven design (DDD) and test-driven development (TDD, in particular negative security tests) fit well with AI-agents and automation

- OWASP ASVS is a good start for agent instructions and skills

As a final note it is important to understand the non-deterministic behaviors of AI-agents and how output from them might change with new models and behavior. Always remember that:

- Keeping humans in the loop (at some point) is a must to assert quality

- Humans are always responsible for the consequences of deployed code

But agents are of course a good automation tool which, when used properly, can improve security and quality at scale, and also help bridge the gap between code and compliance.

Further reading

Defense in Depth as Code: Secure APIs by Design

Defense in Depth as Code: Test Driven Application Security